Computer Systems A Programmer's Perspective 3rd Edition Pdf Download UPDATED


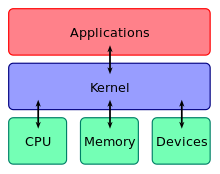

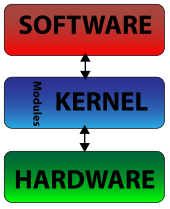

The kernel is a computer program at the core of a computer's operating system and generally has complete control over everything in the system.[1] It is the portion of the operating system code that is always resident in memory,[2] and facilitates interactions between hardware and software components. A full kernel controls all hardware resources (e.g. I/O, memory, cryptography) via device drivers, arbitrates conflicts between processes concerning such resources, and optimizes the utilization of common resources, e.g. CPU and cache usage, file systems, and network sockets. On most systems, the kernel is one of the first programs loaded on startup (after the bootloader). It handles the rest of startup as well as memory, peripherals, and input/output (I/O) requests from software, translating them into data-processing instructions for the central processing unit.

The critical code of the kernel is usually loaded into a separate area of memory, which is protected from access by application software or other less critical parts of the operating system. The kernel performs its tasks, such as running processes, managing hardware devices such as the hard disk, and handling interrupts, in this protected kernel space. In contrast, application programs such as browsers, word processors, or audio or video players use a separate area of memory, user space. This separation prevents user data and kernel data from interfering with each other and causing instability and slowness,[1] as well as preventing malfunctioning applications from affecting other applications or crashing the entire operating system. Even in systems where the kernel is included in application address spaces, memory protection is used to prevent unauthorized applications from modifying the kernel.

The kernel's interface is a low-level abstraction layer. When a process requests a service from the kernel, it must invoke a system call, usually through a wrapper function.

There are different kernel architecture designs. Monolithic kernels run entirely in a single address space with the CPU executing in supervisor mode, mainly for speed. Microkernels run most but not all of their services in user space,[3] like user processes do, mainly for resilience and modularity.[4] MINIX 3 is a notable example of microkernel design. The Linux kernel, by contrast, is monolithic, although it is also modular, for it can insert and remove loadable kernel modules at runtime.

This central component of a computer system is responsible for executing programs. The kernel takes responsibility for deciding at any time which of the many running programs should be allocated to the processor or processors.

Random-access memory

Random-access memory (RAM) is used to store both program instructions and data.[a] Typically, both need to be present in memory in order for a program to execute. Often multiple programs will want access to memory, frequently demanding more memory than the computer has available. The kernel is responsible for deciding which memory each process can use, and determining what to do when not enough memory is available.

Input/output devices

I/O devices include such peripherals as keyboards, mice, disk drives, printers, USB devices, network adapters, and display devices. The kernel allocates requests from applications to perform I/O to an appropriate device and provides convenient methods for using the device (typically abstracted to the point where the application does not need to know implementation details of the device).

Resource management

Key aspects necessary in resource management are defining the execution domain (address space) and the protection mechanism used to mediate access to the resources within a domain.[5] Kernels also provide methods for synchronization and inter-process communication (IPC). These implementations may be located within the kernel itself or the kernel can also rely on other processes it is running. Although the kernel must provide IPC in order to provide access to the facilities provided by each other, kernels must also provide running programs with a method to make requests to access these facilities. The kernel is also responsible for context switching between processes or threads.

Memory management

The kernel has full access to the system's memory and must allow processes to safely access this memory as they require it. Often the first step in doing this is virtual addressing, usually achieved by paging and/or segmentation. Virtual addressing allows the kernel to make a given physical address appear to be another address, the virtual address. Virtual address spaces may be different for different processes; the memory that one process accesses at a particular (virtual) address may be different memory from what another process accesses at the same address. This allows every program to behave as if it is the only one (apart from the kernel) running and thus prevents applications from crashing each other.[6]
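The address translation described above can be illustrated with a small simulation. This is only a sketch: the `AddressSpace` class, the 4096-byte page size, and the frame numbers are assumptions chosen for illustration, and real translation is done in hardware by the MMU using tables the kernel maintains.

```python
PAGE_SIZE = 4096  # assumed page size for this sketch


class AddressSpace:
    """Per-process page table: virtual page number -> physical frame number."""

    def __init__(self, page_table):
        self.page_table = dict(page_table)

    def translate(self, virtual_address):
        # Split the address into a page number and an offset within the page.
        page, offset = divmod(virtual_address, PAGE_SIZE)
        if page not in self.page_table:
            raise MemoryError(f"no mapping for virtual page {page}")
        return self.page_table[page] * PAGE_SIZE + offset


# Two hypothetical processes map the same virtual page to different frames.
process_a = AddressSpace({0: 7})
process_b = AddressSpace({0: 3})

physical_a = process_a.translate(0x10)
physical_b = process_b.translate(0x10)
```

Both processes use virtual address `0x10`, yet each reaches a different physical location, which is why neither can overwrite the other's data through that address.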

On many systems, a program's virtual address may refer to data which is not currently in memory. The layer of indirection provided by virtual addressing allows the operating system to use other data stores, like a hard drive, to store what would otherwise have to remain in main memory (RAM). As a result, operating systems can allow programs to use more memory than the system has physically available. When a program needs data which is not currently in RAM, the CPU signals to the kernel that this has happened, and the kernel responds by writing the contents of an inactive memory block to disk (if necessary) and replacing it with the data requested by the program. The program can then be resumed from the point where it was stopped. This scheme is generally known as demand paging.
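A toy model may help make the demand-paging sequence concrete. The sketch below assumes a least-recently-used eviction policy, which the text does not specify, and the `DemandPager` class and its fields are hypothetical names; a dictionary stands in for the disk.

```python
from collections import OrderedDict


class DemandPager:
    """Toy pager: a fixed number of RAM frames backed by a 'disk' dictionary."""

    def __init__(self, frame_count, backing_store):
        self.frame_count = frame_count
        self.backing_store = backing_store  # page number -> data on "disk"
        self.resident = OrderedDict()       # pages in RAM, least recently used first
        self.dirty = set()                  # resident pages modified since loading
        self.page_faults = 0

    def _fault_in(self, page):
        self.page_faults += 1
        if len(self.resident) >= self.frame_count:
            # Evict the least recently used page (an assumed policy).
            victim, data = self.resident.popitem(last=False)
            if victim in self.dirty:
                # Write back only if necessary, as the text describes.
                self.backing_store[victim] = data
                self.dirty.discard(victim)
        self.resident[page] = self.backing_store[page]

    def read(self, page):
        if page not in self.resident:
            self._fault_in(page)
        self.resident.move_to_end(page)
        return self.resident[page]

    def write(self, page, data):
        if page not in self.resident:
            self._fault_in(page)
        self.resident[page] = data
        self.resident.move_to_end(page)
        self.dirty.add(page)


disk = {0: "a", 1: "b", 2: "c"}
pager = DemandPager(frame_count=2, backing_store=disk)
pager.write(0, "A")    # page 0 is loaded, then modified in RAM
pager.read(1)          # page 1 is loaded into the second frame
pager.read(2)          # no free frame: page 0 is evicted and written back
value = pager.read(0)  # page 0 is faulted in again from disk
```

The program sees three pages even though only two frames exist; the modified contents of page 0 survive the eviction because the pager wrote them back before reusing the frame.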

Virtual addressing also allows creation of virtual partitions of memory in two disjointed areas, one being reserved for the kernel (kernel space) and the other for the applications (user space). The applications are not permitted by the processor to address kernel memory, thus preventing an application from damaging the running kernel. This fundamental partition of memory space has contributed much to the current designs of actual general-purpose kernels and is almost universal in such systems, although some research kernels (e.g., Singularity) take other approaches.

Device management

To perform useful functions, processes need access to the peripherals connected to the computer, which are controlled by the kernel through device drivers. A device driver is a computer program encapsulating, monitoring and controlling a hardware device (via its Hardware/Software Interface (HSI)) on behalf of the OS. It provides the operating system with an API, procedures and information about how to control and communicate with a certain piece of hardware. Device drivers are a vital dependency for all operating systems and their applications. The design goal of a driver is abstraction; the function of the driver is to translate the OS-mandated abstract function calls (programming calls) into device-specific calls. In theory, a device should work correctly with a suitable driver. Device drivers are used for e.g. video cards, sound cards, printers, scanners, modems, and network cards.

At the hardware level, common abstractions of device drivers include:

- Interfacing directly

- Using a high-level interface (Video BIOS)

- Using a lower-level device driver (file drivers using disk drivers)

- Simulating work with hardware, while doing something entirely different

And at the software level, device driver abstractions include:

- Allowing the operating system direct access to hardware resources

- Only implementing primitives

- Implementing an interface for non-driver software such as TWAIN

- Implementing a language (often a high-level language such as PostScript)

For example, to show the user something on the screen, an application would make a request to the kernel, which would forward the request to its display driver, which is then responsible for actually plotting the character/pixel.[6]
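The forwarding chain in that example (application, kernel, display driver) can be sketched as follows. All names here (`TextDisplayDriver`, `Kernel.sys_draw_char`, the `"display"` key) are hypothetical, and an in-memory grid stands in for real video hardware.

```python
class TextDisplayDriver:
    """Hypothetical driver that 'plots' characters into an in-memory grid."""

    def __init__(self, columns, rows):
        self.grid = [[" "] * columns for _ in range(rows)]

    def put_char(self, x, y, char):
        # Device-specific work: here, just storing the character.
        self.grid[y][x] = char


class Kernel:
    def __init__(self):
        self.drivers = {}

    def register_driver(self, device_name, driver):
        self.drivers[device_name] = driver

    def sys_draw_char(self, x, y, char):
        # The kernel forwards the abstract request to the registered display driver.
        self.drivers["display"].put_char(x, y, char)


kernel = Kernel()
display = TextDisplayDriver(columns=4, rows=2)
kernel.register_driver("display", display)

# The "application" only talks to the kernel, never to the driver directly.
for column, char in enumerate("Hi"):
    kernel.sys_draw_char(column, 0, char)

first_row = "".join(display.grid[0])
```

Swapping in a different driver object with the same `put_char` method would not require any change to the application code, which is the abstraction the driver is meant to provide.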

A kernel must maintain a list of available devices. This list may be known in advance (e.g., on an embedded system where the kernel will be rewritten if the available hardware changes), configured by the user (typical on older PCs and on systems that are not designed for personal use) or detected by the operating system at run time (normally called plug and play). In plug-and-play systems, a device manager first performs a scan on different peripheral buses, such as Peripheral Component Interconnect (PCI) or Universal Serial Bus (USB), to detect installed devices, and then searches for the appropriate drivers.

As device management is a very OS-specific topic, these drivers are handled differently by each kind of kernel design, but in every case, the kernel has to provide the I/O to allow drivers to physically access their devices through some port or memory location. Important decisions have to be made when designing the device management system, as in some designs accesses may involve context switches, making the operation very CPU-intensive and easily causing a significant performance overhead.[citation needed]

System calls

In computing, a system call is how a process requests a service from an operating system's kernel that it does not normally have permission to run. System calls provide the interface between a process and the operating system. Most operations interacting with the system require permissions not available to a user-level process; e.g., I/O performed with a device present on the system, or any form of communication with other processes, requires the use of system calls.

A system call is a mechanism that is used by the application program to request a service from the operating system. System calls use a machine-code instruction that causes the processor to change mode, for example from user mode to supervisor mode. This is where the operating system performs actions like accessing hardware devices or the memory management unit. Generally the operating system provides a library that sits between the operating system and normal user programs. Usually it is a C library such as glibc, or the Windows API. The library handles the low-level details of passing information to the kernel and switching to supervisor mode. System calls include close, open, read, wait and write.
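As a brief illustration of going through a library rather than trapping into the kernel directly, Python's standard `os` module exposes low-level, file-descriptor-based functions named after several of the calls listed above. On POSIX systems these typically map closely onto the corresponding system calls via the C library, though the exact path is platform-dependent. The file name `demo.txt` is an arbitrary choice for this sketch.

```python
import os
import tempfile

# Work inside a scratch directory that is removed when the block exits.
with tempfile.TemporaryDirectory() as scratch_dir:
    path = os.path.join(scratch_dir, "demo.txt")

    # open: ask the kernel for a file descriptor, creating the file.
    fd = os.open(path, os.O_CREAT | os.O_WRONLY, 0o600)
    # write: hand a buffer to the kernel; the return value is the byte count.
    bytes_written = os.write(fd, b"hello kernel")
    # close: release the descriptor.
    os.close(fd)

    fd = os.open(path, os.O_RDONLY)
    # read: ask the kernel for up to 100 bytes from the file.
    data = os.read(fd, 100)
    os.close(fd)
```

The program never touches the disk controller itself; every step is a request that the kernel validates and carries out on the process's behalf.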

To actually perform useful work, a process must be able to access the services provided by the kernel. This is implemented differently by each kernel, but most provide a C library or an API, which in turn invokes the related kernel functions.[7]

The method of invoking the kernel function varies from kernel to kernel. If memory isolation is in use, it is impossible for a user process to call the kernel directly, because that would be a violation of the processor's access control rules. A few possibilities are:

- Using a software-simulated interrupt. This method is available on most hardware, and is therefore very common.

- Using a call gate. A call gate is a special address stored by the kernel in a list in kernel memory at a location known to the processor. When the processor detects a call to that address, it instead redirects to the target location without causing an access violation. This requires hardware support, but the hardware for it is quite common.

- Using a special system call instruction. This technique requires special hardware support, which common architectures (notably, x86) may lack. System call instructions have been added to recent models of x86 processors, however, and some operating systems for PCs make use of them when available.

- Using a memory-based queue. An application that makes large numbers of requests but does not need to wait for the result of each may add details of requests to an area of memory that the kernel periodically scans to find requests.
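The last option in the list, the memory-based queue, is perhaps the least familiar, so a small simulation may help. This is a sketch under stated assumptions: the `RequestQueueKernel` class, the `"put"` operation, and the tuple layout of a request are all invented for illustration, and a `deque` stands in for the shared memory area.

```python
from collections import deque


class RequestQueueKernel:
    """Toy kernel that polls a shared request queue instead of being called."""

    def __init__(self):
        self.submission_queue = deque()  # stands in for the shared memory area
        self.completions = {}            # request id -> status
        self.storage = {}

    def poll(self):
        """Periodic scan: drain and service every pending request."""
        handled = 0
        while self.submission_queue:
            request_id, operation, key, value = self.submission_queue.popleft()
            if operation == "put":
                self.storage[key] = value
                self.completions[request_id] = "ok"
            else:
                self.completions[request_id] = "unsupported"
            handled += 1
        return handled


kernel = RequestQueueKernel()

# The application enqueues several requests without waiting on any of them.
for request_id in range(3):
    kernel.submission_queue.append(
        (request_id, "put", f"key{request_id}", request_id * 10)
    )

completed_before_poll = len(kernel.completions)
handled = kernel.poll()
```

Nothing completes until the kernel's scan runs, which is the trade-off of this method: many requests can be batched cheaply, at the cost of not getting each result immediately.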

Kernel design decisions

Protection

An important consideration in the design of a kernel is the support it provides for protection from faults (fault tolerance) and from malicious behaviours (security). These two aspects are usually not clearly distinguished, and the adoption of this distinction in the kernel design leads to the rejection of a hierarchical structure for protection.[5]

The mechanisms or policies provided by the kernel can be classified according to several criteria, including: static (enforced at compile time) or dynamic (enforced at run time); pre-emptive or post-detection; according to the protection principles they satisfy (e.g., Denning[8] [9]); whether they are hardware supported or language based; whether they are more an open mechanism or a binding policy; and many more.

Support for hierarchical protection domains[10] is typically implemented using CPU modes.

Many kernels provide implementation of "capabilities", i.e., objects that are provided to user code which allow limited access to an underlying object managed by the kernel. A common example is file handling: a file is a representation of information stored on a permanent storage device. The kernel may be able to perform many different operations, including read, write, delete or execute, but a user-level application may only be permitted to perform some of these operations (e.g., it may only be allowed to read the file). A common implementation of this is for the kernel to provide an object to the application (typically called a "file handle") which the application may then invoke operations on, the validity of which the kernel checks at the time the operation is requested. Such a system may be extended to cover all objects that the kernel manages, and indeed to objects provided by other user applications.
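The file-handle pattern described above can be sketched in a few lines. The class names, the `notes.txt` file, and the string-based rights set are assumptions made for illustration; the point is only that the handle is an opaque token and the kernel checks permissions at the moment each operation is requested.

```python
class FileHandle:
    """Opaque token handed to the application; it carries no file data itself."""

    def __init__(self, handle_id):
        self.handle_id = handle_id


class Kernel:
    def __init__(self):
        self.files = {"notes.txt": "initial contents"}  # hypothetical file
        self.handles = {}  # handle id -> (file name, permitted operations)
        self.next_id = 0

    def open(self, name, rights):
        handle = FileHandle(self.next_id)
        self.handles[handle.handle_id] = (name, frozenset(rights))
        self.next_id += 1
        return handle

    def _check(self, handle, operation):
        # Validity is checked when the operation is requested, not at open time.
        name, rights = self.handles[handle.handle_id]
        if operation not in rights:
            raise PermissionError(f"{operation} not permitted on {name}")
        return name

    def read(self, handle):
        return self.files[self._check(handle, "read")]

    def write(self, handle, data):
        self.files[self._check(handle, "write")] = data


kernel = Kernel()
read_only = kernel.open("notes.txt", {"read"})
contents = kernel.read(read_only)

try:
    kernel.write(read_only, "overwritten")
    write_allowed = True
except PermissionError:
    write_allowed = False
```

Because the rights table lives inside the kernel object, the application cannot widen its own permissions by tampering with the handle it holds.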

An efficient and simple way to provide hardware support of capabilities is to delegate to the memory management unit (MMU) the responsibility of checking access rights for every memory access, a mechanism called capability-based addressing.[11] Most commercial computer architectures lack such MMU support for capabilities.

An alternative approach is to simulate capabilities using commonly supported hierarchical domains. In this approach, each protected object must reside in an address space that the application does not have access to; the kernel also maintains a list of capabilities in such memory. When an application needs to access an object protected by a capability, it performs a system call and the kernel then checks whether the application's capability grants it permission to perform the requested action, and if it is permitted performs the access for it (either directly, or by delegating the request to another user-level process). The performance cost of address space switching limits the practicality of this approach in systems with complex interactions between objects, but it is used in current operating systems for objects that are not accessed frequently or which are not expected to perform quickly.[12] [13]

If the firmware does not support protection mechanisms, it is possible to simulate protection at a higher level, for example by simulating capabilities by manipulating page tables, but there are performance implications.[14] Lack of hardware support may not be an issue, however, for systems that choose to use language-based protection.[15]

An important kernel design decision is the choice of the abstraction levels where the security mechanisms and policies should be implemented. Kernel security mechanisms play a critical role in supporting security at higher levels.[11] [16] [17] [18] [19]

One approach is to use firmware and kernel support for fault tolerance (see above), and build the security policy for malicious behavior on top of that (adding features such as cryptography mechanisms where necessary), delegating some responsibility to the compiler. Approaches that delegate enforcement of security policy to the compiler and/or the application level are often called language-based security.

The lack of many critical security mechanisms in current mainstream operating systems impedes the implementation of adequate security policies at the application abstraction level.[16] In fact, a common misconception in computer security is that any security policy can be implemented in an application regardless of kernel support.[16]

Hardware- or language-based protection

Typical computer systems today use hardware-enforced rules about what programs are allowed to access what data. The processor monitors the execution and stops a program that violates a rule, such as a user process that tries to write to kernel memory. In systems that lack support for capabilities, processes are isolated from each other by using separate address spaces.[20] Calls from user processes into the kernel are regulated by requiring them to use one of the above-described system call methods.

An alternative approach is to use language-based protection. In a language-based protection system, the kernel will only allow code to execute that has been produced by a trusted language compiler. The language may then be designed such that it is impossible for the programmer to instruct it to do something that will violate a security requirement.[15]

Advantages of this approach include:

- No need for separate address spaces. Switching between address spaces is a slow operation that causes a great deal of overhead, and a lot of optimization work is currently performed in order to prevent unnecessary switches in current operating systems. Switching is completely unnecessary in a language-based protection system, as all code can safely operate in the same address space.

- Flexibility. Any protection scheme that can be designed to be expressed via a programming language can be implemented using this method. Changes to the protection scheme (e.g. from a hierarchical system to a capability-based one) do not require new hardware.

Disadvantages include:

- Longer application startup time. Applications must be verified when they are started to ensure they have been compiled by the correct compiler, or may need recompiling either from source code or from bytecode.

- Inflexible type systems. On traditional systems, applications frequently perform operations that are not type safe. Such operations cannot be permitted in a language-based protection system, which means that applications may need to be rewritten and may, in some cases, lose performance.

Examples of systems with language-based protection include JX and Microsoft's Singularity.

Process cooperation

Edsger Dijkstra proved that from a logical point of view, atomic lock and unlock operations operating on binary semaphores are sufficient primitives to express any functionality of process cooperation.[21] However, this approach is generally held to be lacking in terms of safety and efficiency, whereas a message passing approach is more flexible.[22] A number of other approaches (either lower- or higher-level) are available as well, with many modern kernels providing support for systems such as shared memory and remote procedure calls.
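The two styles of cooperation contrasted above can be placed side by side in a short sketch. Threads stand in for processes here, and the counter workload, thread counts, and function names are assumptions chosen purely for illustration.

```python
import queue
import threading

# Approach 1: a binary semaphore guards a shared counter.
binary_semaphore = threading.Semaphore(1)
shared = {"count": 0}


def add_with_semaphore(times):
    for _ in range(times):
        binary_semaphore.acquire()   # "lock"
        shared["count"] += 1         # critical section
        binary_semaphore.release()   # "unlock"


# Approach 2: workers never touch shared state; they only send messages.
mailbox = queue.Queue()


def add_with_messages(times):
    for _ in range(times):
        mailbox.put(1)


workers = [threading.Thread(target=add_with_semaphore, args=(1000,)) for _ in range(4)]
workers += [threading.Thread(target=add_with_messages, args=(1000,)) for _ in range(4)]
for worker in workers:
    worker.start()
for worker in workers:
    worker.join()

# A single receiver drains the mailbox after all senders have finished.
message_total = 0
while not mailbox.empty():
    message_total += mailbox.get()
```

Both approaches arrive at the same total. In the semaphore version every worker must remember to lock and unlock correctly, whereas in the message version only the receiver ever updates the total, which is one reason message passing is often considered easier to reason about.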

I/O device management

The idea of a kernel where I/O devices are handled uniformly with other processes, as parallel co-operating processes, was first proposed and implemented by Brinch Hansen (although similar ideas were suggested in 1967[23] [24]). In Hansen's description of this, the "common" processes are called internal processes, while the I/O devices are called external processes.[22]

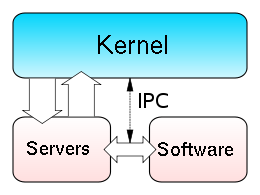

Similar to physical memory, allowing applications direct access to controller ports and registers can cause the controller to malfunction, or the system to crash. In addition, depending on the complexity of the device, some devices can get surprisingly complex to program, and use several different controllers. Because of this, providing a more abstract interface to manage the device is important. This interface is normally provided by a device driver or hardware abstraction layer. Frequently, applications will require access to these devices. The kernel must maintain the list of these devices by querying the system for them in some way. This can be done through the BIOS, or through one of the various system buses (such as PCI/PCIe, or USB). Using an example of a video driver, when an application requests an operation on a device, such as displaying a character, the kernel needs to send this request to the current active video driver. The video driver, in turn, needs to carry out this request. This is an example of inter-process communication (IPC).

Kernel-wide design approaches

Naturally, the above listed tasks and features can be provided in many ways that differ from each other in design and implementation.

The principle of separation of mechanism and policy is the substantial difference between the philosophy of micro and monolithic kernels.[25] [26] Here a mechanism is the support that allows the implementation of many different policies, while a policy is a particular "mode of operation". Example:

- Mechanism: User login attempts are routed to an authorization server

- Policy: Authorization server requires a password which is verified against stored passwords in a database

Because the mechanism and policy are separated, the policy can be easily changed to e.g. require the use of a security token.
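The login example can be sketched in code to show what "easily changed" means in practice. The `LoginMechanism` class, the two policy functions, and the sample credentials are all hypothetical, and passwords are kept in plain text only to keep the sketch short.

```python
def password_policy(stored_passwords):
    """Policy: accept a login if the password matches the stored one."""
    def check(user, credential):
        # Plain-text comparison is for brevity only; not a real-world practice.
        return stored_passwords.get(user) == credential
    return check


def token_policy(valid_tokens):
    """Alternative policy: accept a login if the user presents a known token."""
    def check(user, credential):
        return (user, credential) in valid_tokens
    return check


class LoginMechanism:
    """Mechanism: route every login attempt to whichever policy is installed."""

    def __init__(self, policy):
        self.policy = policy

    def attempt_login(self, user, credential):
        return self.policy(user, credential)


login = LoginMechanism(password_policy({"alice": "s3cret"}))
password_result = login.attempt_login("alice", "s3cret")

# Swap the policy; the routing mechanism itself is untouched.
login.policy = token_policy({("alice", "token-123")})
old_password_result = login.attempt_login("alice", "s3cret")
token_result = login.attempt_login("alice", "token-123")
```

Only one assignment was needed to move from passwords to tokens, because `LoginMechanism` never encoded any assumption about how credentials are validated.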

In a minimal microkernel only some very basic policies are included,[26] and its mechanisms allow what is running on top of the kernel (the remaining part of the operating system and the other applications) to decide which policies to adopt (such as memory management, high-level process scheduling, file system management, etc.).[5] [22] A monolithic kernel instead tends to include many policies, therefore restricting the rest of the system to rely on them.

Per Brinch Hansen presented arguments in favour of separation of mechanism and policy.[5] [22] The failure to properly fulfill this separation is one of the major causes of the lack of substantial innovation in existing operating systems,[5] a problem common in computer architecture.[27] [28] [29] The monolithic design is induced by the "kernel mode"/"user mode" architectural approach to protection (technically called hierarchical protection domains), which is common in conventional commercial systems;[30] in fact, every module needing protection is therefore preferably included into the kernel.[30] This link between monolithic design and "privileged mode" can be traced back to the key issue of mechanism-policy separation;[5] in fact the "privileged mode" architectural approach melds together the protection mechanism with the security policies, while the major alternative architectural approach, capability-based addressing, clearly distinguishes between the two, leading naturally to a microkernel design[5] (see Separation of protection and security).

While monolithic kernels execute all of their code in the same address space (kernel space), microkernels try to run most of their services in user space, aiming to improve maintainability and modularity of the codebase.[4] Most kernels do not fit exactly into one of these categories, but are rather found in between these two designs. These are called hybrid kernels. More exotic designs such as nanokernels and exokernels are available, but are seldom used for production systems. The Xen hypervisor, for example, is an exokernel.

Monolithic kernels

Diagram of a monolithic kernel

In a monolithic kernel, all OS services run along with the main kernel thread, thus also residing in the same memory area. This approach provides rich and powerful hardware access. Some developers, such as UNIX developer Ken Thompson, maintain that it is "easier to implement a monolithic kernel"[31] than microkernels. The main disadvantages of monolithic kernels are the dependencies between system components (a bug in a device driver might crash the entire system) and the fact that large kernels can become very difficult to maintain.

Monolithic kernels, which have traditionally been used by Unix-like operating systems, contain all the operating system core functions and the device drivers. This is the traditional design of UNIX systems. A monolithic kernel is one single program that contains all of the code necessary to perform every kernel-related task. Every part which is to be accessed by most programs which cannot be put in a library is in the kernel space: device drivers, scheduler, memory handling, file systems, and network stacks. Many system calls are provided to applications, to allow them to access all those services. A monolithic kernel, while initially loaded with subsystems that may not be needed, can be tuned to a point where it is as fast as or faster than the one that was specifically designed for the hardware, although more relevant in a general sense. Modern monolithic kernels, such as those of Linux (one of the kernels of the GNU operating system) and FreeBSD, both of which fall into the category of Unix-like operating systems, feature the ability to load modules at runtime, thereby allowing easy extension of the kernel's capabilities as required, while helping to minimize the amount of code running in kernel space. In the monolithic kernel, some advantages hinge on these points:

- Since there is less software involved it is faster.

- As it is one unmarried piece of software it should be smaller both in source and compiled forms.

- Less code generally means fewer bugs which can translate to fewer security problems.

Most work in the monolithic kernel is done via system calls. These are interfaces, usually kept in a tabular structure, that access some subsystem within the kernel such as disk operations. Essentially calls are made within programs and a checked copy of the request is passed through the system call. Hence, the request does not have far to travel at all. The monolithic Linux kernel can be made extremely small not only because of its ability to dynamically load modules but also because of its ease of customization. In fact, there are some versions that are small enough to fit together with a large number of utilities and other programs on a single floppy disk and still provide a fully functional operating system (one of the most popular of which is muLinux). This ability to miniaturize its kernel has also led to a rapid growth in the use of Linux in embedded systems.
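The "tabular structure" of system calls can be pictured as a dispatch table indexed by call number. The sketch below is a simulation, not how any particular kernel lays out its table: the `ToyKernel` class, the call numbers 0 and 1, and the block-storage handlers are invented for illustration.

```python
class ToyKernel:
    def __init__(self):
        self.disk = {}
        # System call table: call number -> handler inside the kernel.
        self.syscall_table = {
            0: self.sys_write_block,
            1: self.sys_read_block,
        }

    def sys_write_block(self, block, data):
        self.disk[block] = data
        return len(data)

    def sys_read_block(self, block):
        return self.disk.get(block, b"")

    def syscall(self, number, *args):
        """Single entry point: look up the handler and pass it the request."""
        handler = self.syscall_table.get(number)
        if handler is None:
            return -1  # unknown call number (an assumed error convention)
        # The kernel works on its own copy of the arguments.
        request = tuple(args)
        return handler(*request)


kernel = ToyKernel()
written = kernel.syscall(0, 5, b"data")   # call 0: write block 5
read_back = kernel.syscall(1, 5)          # call 1: read block 5
unknown = kernel.syscall(99)              # not in the table
```

Because the disk subsystem lives in the same object as the dispatcher, a call is just a table lookup followed by an ordinary function call, which mirrors the short path the text attributes to monolithic kernels.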

These types of kernels consist of the core functions of the operating system and the device drivers with the ability to load modules at runtime. They provide rich and powerful abstractions of the underlying hardware. They provide a small set of simple hardware abstractions and use applications called servers to provide more functionality. This particular approach defines a high-level virtual interface over the hardware, with a set of system calls to implement operating system services such as process management, concurrency and memory management in several modules that run in supervisor mode. This design has several flaws and limitations:

- Coding in kernel can be challenging, in part because one cannot use common libraries (like a full-featured libc), and because one needs to use a source-level debugger like gdb. Rebooting the computer is often required. This is not just a problem of convenience to the developers. When debugging is harder, and as difficulties become stronger, it becomes more likely that code will be "buggier".

- Bugs in one part of the kernel have strong side effects; since every function in the kernel has all the privileges, a bug in one function can corrupt the data structure of another, totally unrelated part of the kernel, or of any running program.

- Kernels often become very large and difficult to maintain.

- Even if the modules servicing these operations are separate from the whole, the code integration is tight and hard to do correctly.

- Since the modules run in the same address space, a bug can bring down the entire system.

- Monolithic kernels are not portable; therefore, they must be rewritten for each new architecture that the operating system is to be used on.

In the microkernel approach, the kernel itself only provides basic functionality that allows the execution of servers, separate programs that assume former kernel functions, such as device drivers, GUI servers, etc.

Examples of monolithic kernels are AIX kernel, HP-UX kernel and Solaris kernel.

Microkernels

Microkernel (also abbreviated μK or uK) is the term describing an approach to operating system design by which the functionality of the system is moved out of the traditional "kernel", into a set of "servers" that communicate through a "minimal" kernel, leaving as little as possible in "system space" and as much as possible in "user space". A microkernel that is designed for a specific platform or device is only ever going to have what it needs to operate. The microkernel approach consists of defining a simple abstraction over the hardware, with a set of primitives or system calls to implement minimal OS services such as memory management, multitasking, and inter-process communication. Other services, including those normally provided by the kernel, such as networking, are implemented in user-space programs, referred to as servers. Microkernels are easier to maintain than monolithic kernels, but the large number of system calls and context switches might slow down the system because they typically generate more overhead than plain function calls.

Only parts which really require being in a privileged mode are in kernel space: IPC (inter-process communication), basic scheduler or scheduling primitives, basic memory handling, and basic I/O primitives. Many critical parts are now running in user space: the complete scheduler, memory handling, file systems, and network stacks. Microkernels were invented as a reaction to traditional "monolithic" kernel design, whereby all system functionality was put in one static program running in a special "system" mode of the processor. In the microkernel, only the most fundamental of tasks are performed, such as being able to access some (not necessarily all) of the hardware, manage memory and coordinate message passing between the processes. Some systems that use microkernels are QNX and the HURD. In the case of QNX and Hurd, user sessions can be entire snapshots of the system itself, or views as they are referred to. The very essence of the microkernel architecture illustrates some of its advantages:

- Easier to maintain

- Patches can be tested in a separate instance, and then swapped in to take over a production instance.

- Rapid development time: new software can be tested without having to reboot the kernel.

- More persistence in general: if one instance goes haywire, it is often possible to substitute it with an operational mirror.

Most microkernels use a message passing system to handle requests from one server to another. The message passing system generally operates on a port basis with the microkernel. As an example, if a request for more memory is sent, a port is opened with the microkernel and the request is sent through. Once within the microkernel, the steps are similar to system calls. The rationale was that this would bring modularity to the system architecture, which would entail a cleaner system that is easier to debug or dynamically modify, customizable to users' needs, and better performing. Microkernels are part of operating systems such as GNU Hurd, MINIX, MkLinux, QNX and Redox OS. Although microkernels are very small by themselves, in combination with all their required auxiliary code they are, in fact, often larger than monolithic kernels. Advocates of monolithic kernels also point out that the two-tiered structure of microkernel systems, in which most of the operating system does not interact directly with the hardware, creates a not-insignificant cost in terms of system efficiency. These types of kernels normally provide only minimal services such as defining memory address spaces, inter-process communication (IPC) and process management. Other functions, such as running the hardware processes, are not handled directly by microkernels. Proponents of microkernels point out that monolithic kernels have the disadvantage that an error in the kernel can cause the entire system to crash. With a microkernel, however, if a kernel process crashes, it is still possible to prevent a crash of the system as a whole by merely restarting the service that caused the error.
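The port-based request for more memory described above can be sketched in a few lines. This is a toy model rather than the interface of any real microkernel: the names `MicroKernel`, `Port`, `MemoryServer` and `request_memory` are hypothetical, and the "kernel" here does nothing except create ports and move messages between them.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Message:
    sender: str      # name of the port to which a reply should be sent
    kind: str
    payload: dict


@dataclass
class Port:
    """A named mailbox; all names in this sketch are hypothetical."""
    name: str
    queue: deque = field(default_factory=deque)


class MicroKernel:
    """Toy kernel: it only opens ports and routes messages."""

    def __init__(self):
        self.ports = {}
        self.messages_routed = 0

    def open_port(self, name):
        self.ports[name] = Port(name)
        return self.ports[name]

    def send(self, port_name, message):
        self.ports[port_name].queue.append(message)
        self.messages_routed += 1

    def receive(self, port_name):
        queue = self.ports[port_name].queue
        return queue.popleft() if queue else None


class MemoryServer:
    """User-space server that answers 'alloc' requests."""

    def __init__(self, kernel, total_pages):
        self.kernel = kernel
        self.free_pages = total_pages
        kernel.open_port("memory")

    def serve_one(self):
        request = self.kernel.receive("memory")
        if request is None:
            return
        pages = request.payload["pages"]
        granted = pages <= self.free_pages
        if granted:
            self.free_pages -= pages
        reply = Message("memory", "alloc_reply", {"granted": granted, "pages": pages})
        self.kernel.send(request.sender, reply)


def request_memory(kernel, server, client_port, pages):
    """Send a request through the kernel, let the server run, read the reply."""
    kernel.send("memory", Message(client_port, "alloc", {"pages": pages}))
    server.serve_one()
    return kernel.receive(client_port).payload


kernel = MicroKernel()
server = MemoryServer(kernel, total_pages=8)
kernel.open_port("app")
first = request_memory(kernel, server, "app", 5)
second = request_memory(kernel, server, "app", 5)
print(first, second, kernel.messages_routed)
```

Each allocation costs two routed messages (request and reply), which is the kind of overhead the surrounding text attributes to microkernel designs.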

Other services provided by the kernel, such as networking, are implemented in user-space programs referred to as servers. Servers allow the operating system to be modified by simply starting and stopping programs. For a machine without networking support, for instance, the networking server is not started. The task of moving in and out of the kernel to move data between the various applications and servers creates overhead, which is detrimental to the efficiency of microkernels in comparison with monolithic kernels.
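As a rough illustration of starting, stopping and restarting servers, the following sketch models a supervisor that replaces a crashed server with a fresh instance instead of letting the failure take the whole system down. `ServiceManager` and `NetworkServer` are invented names for this example, and the "crash" is simply a Python exception.

```python
class NetworkServer:
    """Stand-in for a user-space networking server (hypothetical)."""

    def handle(self, request):
        if request == "malformed-packet":
            raise ValueError("server crashed on bad input")
        return f"sent:{request}"


class ServiceManager:
    """Starts, stops and restarts servers; the rest of the system keeps running."""

    def __init__(self):
        self.factories = {}
        self.running = {}
        self.restarts = {}

    def start(self, name, factory):
        self.factories[name] = factory
        self.running[name] = factory()
        self.restarts.setdefault(name, 0)

    def stop(self, name):
        self.running.pop(name, None)

    def call(self, name, request):
        server = self.running.get(name)
        if server is None:
            # The server was never started, e.g. a machine without networking.
            return "service-unavailable"
        try:
            return server.handle(request)
        except Exception:
            # Only the failed service is replaced with a fresh instance.
            self.running[name] = self.factories[name]()
            self.restarts[name] += 1
            return "service-restarted"


manager = ServiceManager()
manager.start("net", NetworkServer)
results = [
    manager.call("net", "hello"),
    manager.call("net", "malformed-packet"),
    manager.call("net", "hello again"),
]
manager.stop("net")
results.append(manager.call("net", "hello"))
print(results)
```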

The microkernel approach also has disadvantages. Some are:

- Larger running memory footprint

- More software for interfacing is required, so there is a potential for performance loss.

- Messaging bugs can be harder to fix because of the longer trip messages have to take, versus the one-off copy in a monolithic kernel.

- Process management in general can be very complicated.

The disadvantages of microkernels are extremely context-based. As an example, they work well for small single-purpose (and critical) systems because if not many processes need to run, then the complications of process management are effectively mitigated.

A microkernel allows the implementation of the remaining part of the operating system as a normal application program written in a high-level language, and the use of different operating systems on top of the same unchanged kernel. It is also possible to dynamically switch among operating systems and to have more than one active simultaneously.[22]

Monolithic kernels vs. microkernels

As the computer kernel grows, so grows the size and vulnerability of its trusted computing base; and, besides reducing security, there is the problem of enlarging the memory footprint. This is mitigated to some degree by perfecting the virtual memory system, but not all computer architectures have virtual memory support.[32] To reduce the kernel's footprint, extensive editing has to be performed to carefully remove unneeded code, which can be very difficult with non-obvious interdependencies between parts of a kernel with millions of lines of code.

By the early 1990s, due to the various shortcomings of monolithic kernels versus microkernels, monolithic kernels were considered obsolete by virtually all operating system researchers.[citation needed] As a result, the design of Linux as a monolithic kernel rather than a microkernel was the topic of a famous debate between Linus Torvalds and Andrew Tanenbaum.[33] There is merit on both sides of the argument presented in the Tanenbaum–Torvalds debate.

Performance

Monolithic kernels are designed to have all of their code in the same address space (kernel space), which some developers argue is necessary to increase the performance of the system.[34] Some developers also maintain that monolithic systems are extremely efficient if well written.[34] The monolithic model tends to be more efficient[35] through the use of shared kernel memory, rather than the slower IPC system of microkernel designs, which is typically based on message passing.[citation needed]

The performance of microkernels was poor in both the 1980s and early 1990s.[36][37] However, studies that empirically measured the performance of these microkernels did not analyze the reasons for such inefficiency.[36] The explanations of this data were left to "folklore", with the assumption that they were due to the increased frequency of switches from "kernel-mode" to "user-mode", to the increased frequency of inter-process communication and to the increased frequency of context switches.[36]
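The "folklore" explanation can be made concrete with a toy count of user/kernel transitions for a single file read. The two request paths below are assumptions chosen for illustration (component names such as `vfs`, `fs server` and `disk server` are hypothetical), not measurements of any real system.

```python
USER, KERNEL = "user", "kernel"


def mode_switches(hops):
    """Count transitions between user mode and kernel mode along a request path."""
    return sum(1 for a, b in zip(hops, hops[1:]) if a[1] != b[1])


# Monolithic design: one system call, then plain function calls inside the kernel.
monolithic_read = [
    ("app", USER),
    ("vfs", KERNEL),
    ("disk driver", KERNEL),
    ("vfs", KERNEL),
    ("app", USER),
]

# Microkernel design: every hop between user-space servers passes through kernel IPC.
microkernel_read = [
    ("app", USER),
    ("ipc", KERNEL),
    ("fs server", USER),
    ("ipc", KERNEL),
    ("disk server", USER),
    ("ipc", KERNEL),
    ("fs server", USER),
    ("ipc", KERNEL),
    ("app", USER),
]

print(mode_switches(monolithic_read), mode_switches(microkernel_read))
```

Under these assumptions the same request crosses the user/kernel boundary two times in the monolithic path and eight times in the microkernel path. The model says nothing about how expensive each crossing is, which is the part that later work on efficient microkernels set out to reduce.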

In fact, as guessed in 1995, the reasons for the poor performance of microkernels might as well have been: (1) an actual inefficiency of the whole microkernel approach, (2) the particular concepts implemented in those microkernels, and (3) the particular implementation of those concepts. Therefore it remained to be studied whether the solution to build an efficient microkernel was, unlike previous attempts, to apply the correct construction techniques.[36]

On the other hand, the hierarchical protection domains architecture that leads to the design of a monolithic kernel[30] has a significant performance drawback each time there is an interaction between different levels of protection (i.e., when a process has to manipulate a data structure both in "user mode" and "supervisor mode"), since this requires message copying by value.[38]
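The difference between passing data by value and sharing it can be shown with an ordinary buffer. The sketch below is only an analogy in user-level Python, not kernel code: `bytes(...)` stands in for a by-value copy across a protection boundary, and `memoryview(...)` stands in for shared memory.

```python
user_buffer = bytearray(b"request-0")

shared_view = memoryview(user_buffer)  # shares the same memory, no copy made
kernel_copy = bytes(user_buffer)       # by-value copy, costs time and memory

# The "user" side changes its buffer after handing the data over.
user_buffer[8] = ord("1")

print(bytes(shared_view))  # reflects the later change
print(kernel_copy)         # still holds the original contents
```

The copy is isolated from later changes by the sender, which is what a protection boundary needs, but every transfer pays for duplicating the data.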

The hybrid kernel approach combines the speed and simpler design of a monolithic kernel with the modularity and execution safety of a microkernel.

Hybrid (or modular) kernels

Hybrid kernels are used in most commercial operating systems such as Microsoft Windows NT 3.1, NT 3.5, NT 3.51, NT 4.0, 2000, XP, Vista, 7, 8, 8.1 and 10. Apple Inc's own macOS uses a hybrid kernel called XNU, which is based upon code from OSF/1's Mach kernel (OSFMK 7.3)[39] and FreeBSD's monolithic kernel. Hybrid kernels are similar to microkernels, except that they include some additional, "non-essential" code in kernel space so that it runs more quickly than it would in user space. They are extensions of microkernels with some properties of monolithic kernels, a compromise that some developers implemented to accommodate the major advantages of both designs. Unlike monolithic kernels, these types of kernels are unable to load modules at runtime on their own. In practice, this means running some services (such as the network stack or the filesystem) in kernel space to reduce the performance overhead of a traditional microkernel, but still running kernel code (such as device drivers) as servers in user space.

Many traditionally monolithic kernels are now at least adding (or else using) the module capability. The most well known of these kernels is the Linux kernel. A modular kernel can have parts that are built into the core kernel binary and parts that are separate binaries loaded into memory on demand. It is important to note that a code-tainted module has the potential to destabilize a running kernel. Many people become confused on this point when discussing microkernels. It is possible to write a driver for a microkernel in a completely separate memory space and test it before going live. When a kernel module is loaded, it accesses the monolithic portion's memory space by adding to it what it needs, thereby opening the doorway to possible pollution. A few advantages of the modular (or hybrid) kernel are:

- Faster development time for drivers that can operate from within modules. No reboot is required for testing (provided the kernel is not destabilized).

- On-demand capability versus spending time recompiling a whole kernel for things like new drivers or subsystems.

- Faster integration of third-party technology (related to development but pertinent unto itself nonetheless).

Modules generally communicate with the kernel using a module interface of some sort. The interface is generalized (although particular to a given operating system), so it is not always possible to use modules. Often the device drivers may need more flexibility than the module interface affords. Essentially, it is two system calls, and often the safety checks that only have to be done once in the monolithic kernel may now be done twice. Some of the disadvantages of the modular approach are:

- With more interfaces to pass through, the possibility of increased bugs exists (which implies more security holes).

- Maintaining modules can be confusing for some administrators when dealing with problems like symbol differences.
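The loading mechanism and the "symbol differences" problem can be illustrated with a small model. This is a sketch under stated assumptions: `ModularKernel`, the symbol names and the integer versions are all hypothetical, and real kernels resolve symbols in a far more involved way.

```python
class ModuleLoadError(Exception):
    pass


class ModularKernel:
    """Toy kernel that exports versioned symbols to loadable modules."""

    def __init__(self, exported):
        self.symbols = dict(exported)  # symbol name -> interface version
        self.modules = {}              # module name -> symbols it provides

    def load(self, name, requires, provides=None):
        # Refuse the module if any required symbol is missing or has another version.
        for symbol, version in requires.items():
            found = self.symbols.get(symbol)
            if found != version:
                raise ModuleLoadError(
                    f"{name}: needs {symbol} v{version}, kernel has {found}"
                )
        provides = dict(provides or {})
        # The module adds its own symbols to the shared kernel namespace.
        self.symbols.update(provides)
        self.modules[name] = provides

    def unload(self, name):
        for symbol in self.modules.pop(name):
            del self.symbols[symbol]


kernel = ModularKernel({"net_register": 2, "mem_alloc": 1})
kernel.load("nic_driver", {"net_register": 2, "mem_alloc": 1}, {"nic_send": 1})
symbols_after_load = sorted(kernel.symbols)

try:
    kernel.load("old_driver", {"net_register": 1})
    load_error = None
except ModuleLoadError as exc:
    load_error = str(exc)

kernel.unload("nic_driver")
print(symbols_after_load, load_error, sorted(kernel.symbols))
```

The driver is added and removed without restarting the toy kernel, while a module built against an older interface version is rejected at load time.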

Nanokernels

A nanokernel delegates virtually all services – including even the most basic ones like interrupt controllers or the timer – to device drivers to make the kernel memory requirement even smaller than that of a traditional microkernel.[40]

Exokernels

Exokernels are a still-experimental approach to operating system design. They differ from other types of kernels in limiting their functionality to the protection and multiplexing of the raw hardware, providing no hardware abstractions on top of which to develop applications. This separation of hardware protection from hardware management enables application developers to determine how to make the most efficient use of the available hardware for each specific program.

Exokernels in themselves are extremely small. However, they are accompanied by library operating systems (see also unikernel), providing application developers with the functionalities of a conventional operating system. This comes down to every user writing their own rest-of-the-kernel from near scratch, which is a very risky, complex and quite daunting assignment, particularly in a time-constrained production-oriented environment, which is why exokernels have never caught on.[citation needed] A major advantage of exokernel-based systems is that they can incorporate multiple library operating systems, each exporting a different API, for example one for high-level UI development and one for real-time control.
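The split between protection in the kernel and abstraction in a library can be sketched as follows. `ExoKernel` and `TinyFileLibrary` are hypothetical names; the "hardware" is a list of fixed-size blocks, and the kernel only tracks which application owns which block.

```python
class ExoKernel:
    """Toy exokernel: hands out raw blocks and checks ownership, nothing more."""

    def __init__(self, block_count, block_size=8):
        self.block_size = block_size
        self.blocks = [bytes(block_size) for _ in range(block_count)]
        self.owner = [None] * block_count

    def allocate(self, app):
        for index, owner in enumerate(self.owner):
            if owner is None:
                self.owner[index] = app
                return index
        raise MemoryError("no free blocks")

    def _check(self, app, index):
        if self.owner[index] != app:
            raise PermissionError(f"{app} does not own block {index}")

    def write(self, app, index, data):
        self._check(app, index)
        # Pad short data with zero bytes so every block has the same size.
        self.blocks[index] = data.ljust(self.block_size, b"\0")[: self.block_size]

    def read(self, app, index):
        self._check(app, index)
        return self.blocks[index]


class TinyFileLibrary:
    """Library operating system: the 'file' abstraction lives in the application."""

    def __init__(self, kernel, app):
        self.kernel, self.app = kernel, app
        self.files = {}  # file name -> (block indexes, length in bytes)

    def write_file(self, name, data):
        size = self.kernel.block_size
        indexes = []
        for offset in range(0, len(data), size):
            index = self.kernel.allocate(self.app)
            self.kernel.write(self.app, index, data[offset:offset + size])
            indexes.append(index)
        self.files[name] = (indexes, len(data))

    def read_file(self, name):
        indexes, length = self.files[name]
        raw = b"".join(self.kernel.read(self.app, i) for i in indexes)
        return raw[:length]


kernel = ExoKernel(block_count=4)
editor_fs = TinyFileLibrary(kernel, "editor")
editor_fs.write_file("notes", b"exokernels multiplex")
print(editor_fs.read_file("notes"), kernel.owner)
```

Another application could link a different library with its own on-disk layout over the same kernel, which is the multiple-library-OS advantage mentioned above. The kernel itself has no notion of a file.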

History of kernel development

Early operating system kernels

Strictly speaking, an operating system (and thus, a kernel) is not required to run a computer. Programs can be directly loaded and executed on the "bare metal" machine, provided that the authors of those programs are willing to work without any hardware abstraction or operating system support. Most early computers operated this way during the 1950s and early 1960s, and were reset and reloaded between the execution of different programs. Eventually, small ancillary programs such as program loaders and debuggers were left in memory between runs, or loaded from ROM. As these were developed, they formed the basis of what became early operating system kernels. The "bare metal" approach is still used today on some video game consoles and embedded systems,[41] but in general, newer computers use modern operating systems and kernels.

In 1969, the RC 4000 Multiprogramming System introduced the system design philosophy of a small nucleus "upon which operating systems for different purposes could be built in an orderly manner",[42] which would later be called the microkernel approach.

Time-sharing operating systems

In the decade preceding Unix, computers had grown enormously in power – to the point where computer operators were looking for new ways to get people to use their spare time on their machines. One of the major developments during this era was time-sharing, whereby a number of users would get small slices of computer time, at a rate at which it appeared they were each connected to their own, slower, machine.[43]

The development of time-sharing systems led to a number of problems. One was that users, particularly at universities where the systems were being developed, seemed to want to hack the system to get more CPU time. For this reason, security and access control became a major focus of the Multics project in 1965.[44] Another ongoing issue was properly handling computing resources: users spent most of their time staring at the terminal and thinking about what to input instead of actually using the resources of the computer, and a time-sharing system should give the CPU time to an active user during these periods. Finally, the systems typically offered a memory hierarchy several layers deep, and partitioning this expensive resource led to major developments in virtual memory systems.
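The idea of giving each user a small slice of computer time can be sketched with a round-robin loop. Round-robin is used here as one simple way to share a CPU, not as a description of how any particular historical system scheduled its users; the job names and the quantum are made up.

```python
from collections import deque


def round_robin(jobs, quantum):
    """Return the order in which jobs receive CPU slices.

    jobs maps a user name to the total time units that user's job needs;
    quantum is the largest slice handed out at once.
    """
    queue = deque(jobs.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        run = min(quantum, remaining)
        timeline.append((name, run))
        if remaining > run:
            # Unfinished jobs go to the back of the queue and wait their turn.
            queue.append((name, remaining - run))
    return timeline


timeline = round_robin({"alice": 5, "bob": 2, "carol": 3}, quantum=2)
print(timeline)
```

With a quantum that is short compared with human reaction time, each user sees steady progress, as if connected to a private but slower machine.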

Amiga

The Commodore Amiga was released in 1985, and was among the first – and certainly most successful – home computers to feature an advanced kernel architecture. The AmigaOS kernel's executive component, exec.library, uses a microkernel message-passing design, but there are other kernel components, like graphics.library, that have direct access to the hardware. There is no memory protection, and the kernel is almost always running in user mode. Only special actions are executed in kernel mode, and user-mode applications can ask the operating system to execute their code in kernel mode.

Unix

A diagram of the predecessor/successor family relationship for Unix-like systems

During the design phase of Unix, programmers decided to model every high-level device as a file, because they believed the purpose of computation was data transformation.[45]

For instance, printers were represented as a "file" at a known location – when data was copied to the file, it printed out. Other systems, to provide similar functionality, tended to virtualize devices at a lower level – that is, both devices and files would be instances of some lower-level concept. Virtualizing the system at the file level allowed users to manipulate the entire system using their existing file management utilities and concepts, dramatically simplifying operation. As an extension of the same paradigm, Unix allows programmers to manipulate files using a series of small programs, using the concept of pipes, which allowed users to complete operations in stages, feeding a file through a chain of single-purpose tools. Although the end result was the same, using smaller programs in this way dramatically increased flexibility as well as ease of development and use, allowing the user to modify their workflow by adding or removing a program from the chain.
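A chain of single-purpose stages connected by pipes can be sketched with Python's `os.pipe`, which returns a pair of file descriptors that are read and written like ordinary files. The helper `run_stage` and the two transformations are invented for this example. For simplicity the stages run one after another in a single process, which only works because the data is small enough to fit in the pipe buffer; a shell would run each stage as a separate, concurrent process.

```python
import os


def run_stage(transform, read_fd, write_fd):
    """Read lines from one descriptor, transform each, write to another."""
    with os.fdopen(read_fd, "rb") as source, os.fdopen(write_fd, "wb") as sink:
        for line in source:
            sink.write(transform(line))


in_read, in_write = os.pipe()
mid_read, mid_write = os.pipe()
out_read, out_write = os.pipe()

# Feed the first pipe, then close its write end so readers see end-of-file.
with os.fdopen(in_write, "wb") as feed:
    feed.write(b"kernel\nshell\n")

run_stage(bytes.upper, in_read, mid_write)                  # stage 1: upper-case
run_stage(lambda line: b"> " + line, mid_read, out_write)   # stage 2: add a prefix

with os.fdopen(out_read, "rb") as result:
    output = result.read()
print(output)
```

Removing or reordering a `run_stage` call changes the workflow without touching the other stages, which is the flexibility the paragraph above describes.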

In the Unix model, the operating system consists of two parts: first, the huge collection of utility programs that drive most operations; second, the kernel that runs the programs.[45] Under Unix, from a programming standpoint, the distinction between the two is fairly thin; the kernel is a program, running in supervisor mode,[46] that acts as a program loader and supervisor for the small utility programs making up the rest of the system, and provides locking and I/O services for these programs; beyond that, the kernel didn't intervene at all in user space.

Over the years the computing model changed, and Unix's treatment of everything as a file or byte stream was no longer as universally applicable as it was before. Although a terminal could be treated as a file or a byte stream, which is printed to or read from, the same did not seem to be true for a graphical user interface. Networking posed another problem. Even if network communication can be compared to file access, the low-level packet-oriented architecture dealt with discrete chunks of data and not with whole files. As the capability of computers grew, Unix became increasingly cluttered with code. This growth was also possible because the modularity of the Unix kernel is extensively scalable.[47] While kernels might have had 100,000 lines of code in the seventies and eighties, kernels like Linux, of modern Unix successors like GNU, have more than 13 million lines.[48]

Modern Unix-derivatives are generally based on module-loading monolithic kernels. Examples of this are the Linux kernel in the many distributions of GNU, IBM AIX, as well as the Berkeley Software Distribution variant kernels such as FreeBSD, DragonflyBSD, OpenBSD, NetBSD, and macOS. Apart from these alternatives, amateur developers maintain an active operating system development community, populated by self-written hobby kernels which mostly end up sharing many features with Linux, FreeBSD, DragonflyBSD, OpenBSD or NetBSD kernels and/or being compatible with them.[49]

Mac OS

Apple first launched its classic Mac OS in 1984, bundled with its Macintosh personal computer. Apple moved to a nanokernel design in Mac OS 8.6. In contrast, the modern macOS (originally named Mac OS X) is based on Darwin, which uses a hybrid kernel called XNU, which was created by combining the 4.3BSD kernel and the Mach kernel.[50]

Microsoft Windows

Microsoft Windows was first released in 1985 as an add-on to MS-DOS. Because of its dependence on another operating system, initial releases of Windows, prior to Windows 95, were considered an operating environment (not to be confused with an operating system). This product line continued to evolve through the 1980s and 1990s, with the Windows 9x series adding 32-bit addressing and pre-emptive multitasking, but ended with the release of Windows Me in 2000.

Microsoft also developed Windows NT, an operating system with a very similar interface, but intended for high-end and business users. This line started with the release of Windows NT 3.1 in 1993, and was introduced to general users with the release of Windows XP in October 2001, replacing Windows 9x with a completely different, much more sophisticated operating system. This is the line that continues with Windows 11.

The architecture of Windows NT's kernel is considered a hybrid kernel because the kernel itself contains tasks such as the Window Manager and the IPC Managers, with a client/server layered subsystem model.[51] It was designed as a modified microkernel, as the Windows NT kernel was influenced by the Mach microkernel but does not meet all of the criteria of a pure microkernel.

IBM Supervisor

A supervisory program or supervisor is a computer program, usually part of an operating system, that controls the execution of other routines and regulates work scheduling, input/output operations, error actions, and similar functions, and regulates the flow of work in a data processing system.

Historically, this term was essentially associated with IBM's line of mainframe operating systems starting with OS/360. In other operating systems, the supervisor is generally called the kernel.

In the 1970s, IBM further abstracted the supervisor state from the hardware, resulting in a hypervisor that enabled full virtualization, i.e. the capacity to run multiple operating systems on the same machine totally independently from each other. Hence the first such system was called Virtual Machine or VM.

Development of microkernels

Although Mach, developed by Richard Rashid at Carnegie Mellon University, is the best-known general-purpose microkernel, other microkernels have been developed with more specific aims. The L4 microkernel family (mainly the L3 and the L4 kernel) was created to demonstrate that microkernels are not necessarily slow.[52] Newer implementations such as Fiasco and Pistachio are able to run Linux next to other L4 processes in separate address spaces.[53][54]

Additionally, QNX is a microkernel which is principally used in embedded systems,[55] and the open-source software MINIX, while originally created for educational purposes, is now focused on being a highly reliable and self-healing microkernel OS.

See also

- Comparison of operating system kernels

- Inter-process communication

- Operating system

- Virtual memory

Notes

- ^ It may depend on the computer architecture

References

- ^ a b "Kernel". Linfo. Bellevue Linux Users Group. Archived from the original on 8 December 2006. Retrieved 15 September 2016.

- ^ Randal E. Bryant; David R. O'Hallaron (2016). Computer Systems: A Programmer's Perspective (Third ed.). Pearson. p. 17. ISBN 978-0134092669.

- ^ cf. Daemon (computing)

- ^ a b Roch 2004

- ^ a b c d e f g Wulf 1974 pp.337–345

- ^ a b Silberschatz 1991

- ^ Tanenbaum, Andrew S. (2008). Modern Operating Systems (3rd ed.). Prentice Hall. pp. 50–51. ISBN 978-0-13-600663-3.

. . . nearly all system calls [are] invoked from C programs by calling a library procedure . . . The library procedure . . . executes a TRAP instruction to switch from user mode to kernel mode and start execution . . .

- ^ Denning 1976

- ^ Swift 2005, p.29 quote: "isolation, resource control, decision verification (checking), and error recovery."

- ^ Schroeder 72

- ^ a b Linden 76

- ^ Stephane Eranian and David Mosberger, Virtual Memory in the IA-64 Linux Kernel Archived 2018-04-03 at the Wayback Machine, Prentice Hall PTR, 2002

- ^ Silberschatz & Galvin, Operating System Concepts, 4th ed, pp. 445 & 446

- ^ Hoch, Charles; J. C. Browne (July 1980). "An implementation of capabilities on the PDP-11/45". ACM SIGOPS Operating Systems Review. 14 (3): 22–32. doi:10.1145/850697.850701. S2CID 17487360.

- ^ a b A Language-Based Approach to Security Archived 2018-12-22 at the Wayback Machine, Schneider F., Morrissett G. (Cornell University) and Harper R. (Carnegie Mellon University)

- ^ a b c P. A. Loscocco, S. D. Smalley, P. A. Muckelbauer, R. C. Taylor, S. J. Turner, and J. F. Farrell. The Inevitability of Failure: The Flawed Assumption of Security in Modern Computing Environments Archived 2007-06-21 at the Wayback Machine. In Proceedings of the 21st National Information Systems Security Conference, pages 303–314, Oct. 1998. [1] Archived 2011-07-21 at the Wayback Machine.

- ^ Lepreau, Jay; Ford, Bryan; Hibler, Mike (1996). "The persistent relevance of the local operating system to global applications". Proceedings of the 7th workshop on ACM SIGOPS European workshop Systems support for worldwide applications - EW 7. p. 133. doi:10.1145/504450.504477. S2CID 10027108.

- ^ J. Anderson, Computer Security Technology Planning Study Archived 2011-07-21 at the Wayback Machine, Air Force Elect. Systems Div., ESD-TR-73-51, October 1972.

- ^ Jerry H. Saltzer; Mike D. Schroeder (September 1975). "The protection of information in computer systems". Proceedings of the IEEE. 63 (9): 1278–1308. CiteSeerX 10.1.1.126.9257. doi:10.1109/PROC.1975.9939. S2CID 269166. Archived from the original on 2021-03-08. Retrieved 2007-07-15.

- ^ Jonathan S. Shapiro; Jonathan M. Smith; David J. Farber (1999). "EROS: a fast capability system". Proceedings of the Seventeenth ACM Symposium on Operating Systems Principles. 33 (5): 170–185. doi:10.1145/319344.319163.

- ^ Dijkstra, E. W. Cooperating Sequential Processes. Math. Dep., Technological U., Eindhoven, Sept. 1965.

- ^ a b c d e Brinch Hansen 70 pp.238–241

- ^ Harrison, M. C.; Schwartz, J. T. (1967). "SHARER, a time sharing system for the CDC 6600". Communications of the ACM. 10 (10): 659–665. doi:10.1145/363717.363778. S2CID 14550794. Retrieved 2007-01-07.

- ^ Huxtable, D. H. R.; Warwick, M. T. (1967). Dynamic Supervisors – their design and construction. pp. 11.1–11.17. doi:10.1145/800001.811675. ISBN 9781450373708. S2CID 17709902. Archived from the original on 2020-02-24. Retrieved 2007-01-07.

- ^ Baiardi 1988

- ^ a b Levin 75

- ^ Denning 1980

- ^ Jürgen Nehmer, "The Immortality of Operating Systems, or: Is Research in Operating Systems still Justified?", Lecture Notes In Computer Science; Vol. 563. Proceedings of the International Workshop on Operating Systems of the 90s and Beyond. pp. 77–83 (1991) ISBN 3-540-54987-0 [2] Archived 2017-03-31 at the Wayback Machine quote: "The past 25 years have shown that research on operating system architecture had a minor effect on existing main stream [sic] systems."

- ^ Levy 84, p.1 quote: "Although the complexity of computer applications increases yearly, the underlying hardware architecture for applications has remained unchanged for decades."

- ^ a b c Levy 84, p.1 quote: "Conventional architectures support a single privileged mode of operation. This structure leads to monolithic design; any module needing protection must be part of the single operating system kernel. If, instead, any module could execute within a protected domain, systems could be built as a collection of independent modules extensible by any user."

- ^ "Open Sources: Voices from the Open Source Revolution". 1-56592-582-3. 29 March 1999. Archived from the original on 1 February 2020. Retrieved 24 March 2019.

- ^ Virtual addressing is most commonly accomplished through a built-in memory management unit.

- ^ Recordings of the debate between Torvalds and Tanenbaum can be found at dina.dk Archived 2012-10-03 at the Wayback Machine, groups.google.com Archived 2013-05-26 at the Wayback Machine, oreilly.com Archived 2014-09-21 at the Wayback Machine and Andrew Tanenbaum's website Archived 2015-08-05 at the Wayback Machine

- ^ a b Matthew Russell. "What Is Darwin (and How It Powers Mac OS X)". O'Reilly Media. Archived from the original on 2007-12-08. Retrieved 2008-12-09. quote: "The tightly coupled nature of a monolithic kernel allows it to make very efficient use of the underlying hardware [...] Microkernels, on the other hand, run a lot more of the core processes in userland. [...] Unfortunately, these benefits come at the cost of the microkernel having to pass a lot of information in and out of the kernel space through a process known as a context switch. Context switches introduce considerable overhead and therefore result in a performance penalty."

- ^ "Operating Systems/Kernel Models - Wikiversity". en.wikiversity.org. Archived from the original on 2014-12-18. Retrieved 2014-12-18.

- ^ a b c d Liedtke 95

- ^ Härtig 97

- ^ Hansen 73, section 7.3 p.233 "interactions between different levels of protection require transmission of messages by value"

- ^ Magee, Jim. WWDC 2000 Session 106 – Mac OS X: Kernel. 14 minutes in. Archived from the original on 2021-10-30.

- ^ KeyKOS Nanokernel Architecture Archived 2011-06-21 at the Wayback Machine

- ^ Ball: Embedded Microprocessor Designs, p. 129

- ^ Hansen 2001 (os), pp.17–18

- ^ "BSTJ version of C.ACM Unix paper". bell-labs.com. Archived from the original on 2005-12-30. Retrieved 2006-08-17.

- ^ Introduction and Overview of the Multics System Archived 2011-07-09 at the Wayback Machine, by F. J. Corbató and V. A. Vissotsky.

- ^ a b "The Single Unix Specification". The open group. Archived from the original on 2016-10-04. Retrieved 2016-09-29 .

- ^ The highest privilege level has various names throughout different architectures, such as supervisor mode, kernel mode, CPL0, DPL0, ring 0, etc. See Ring (computer security) for more information.

- ^ "Unix's Revenge". asymco.com. 29 September 2010. Archived from the original on 9 November 2010. Retrieved 2 October 2010.

- ^ Linux Kernel 2.6: It's Worth More! Archived 2011-08-21 at WebCite, by David A. Wheeler, October 12, 2004

- ^ This community mostly gathers at Bona Fide OS Development Archived 2022-01-17 at the Wayback Machine, The Mega-Tokyo Message Board Archived 2022-01-25 at the Wayback Machine and other operating system enthusiast web sites.

- ^ XNU: The Kernel Archived 2011-08-12 at the Wayback Machine

- ^ "Windows - Official Site for Microsoft Windows 10 Home & Pro OS, laptops, PCs, tablets & more". windows.com. Archived from the original on 2011-08-20. Retrieved 2019-03-24.

- ^ "The L4 microkernel family - Overview". os.inf.tu-dresden.de. Archived from the original on 2006-08-21. Retrieved 2006-08-11.

- ^ "The Fiasco microkernel - Overview". os.inf.tu-dresden.de. Archived from the original on 2006-06-16. Retrieved 2006-07-10 .

- ^ Zoller (inaktiv), Heinz (7 December 2013). "L4Ka - L4Ka Project". www.l4ka.org. Archived from the original on 19 April 2001. Retrieved 24 March 2019.

- ^ "QNX Operating Systems". blackberry.qnx.com. Archived from the original on 2019-03-24. Retrieved 2019-03-24 .

Sources

- Roch, Benjamin (2004). "Monolithic kernel vs. Microkernel" (PDF). Archived from the original (PDF) on 2006-11-01. Retrieved 2006-10-12.

- Silberschatz, Abraham; James L. Peterson; Peter B. Galvin (1991). Operating system concepts. Boston, Massachusetts: Addison-Wesley. p. 696. ISBN 978-0-201-51379-0.

- Ball, Stuart R. (2002) [2002]. Embedded Microprocessor Systems: Real World Designs (first ed.). Elsevier Science. ISBN 978-0-7506-7534-5.

- Deitel, Harvey M. (1984) [1982]. An introduction to operating systems (revisited first ed.). Addison-Wesley. p. 673. ISBN 978-0-201-14502-1.

- Denning, Peter J. (December 1976). "Fault tolerant operating systems". ACM Computing Surveys. 8 (4): 359–389. doi:10.1145/356678.356680. ISSN 0360-0300. S2CID 207736773.

- Denning, Peter J. (April 1980). "Why not innovations in computer architecture?". ACM SIGARCH Computer Architecture News. 8 (2): 4–7. doi:10.1145/859504.859506. ISSN 0163-5964. S2CID 14065743.

- Hansen, Per Brinch (April 1970). "The nucleus of a Multiprogramming System". Communications of the ACM. 13 (4): 238–241. CiteSeerX 10.1.1.105.4204. doi:10.1145/362258.362278. ISSN 0001-0782. S2CID 9414037.

- Hansen, Per Brinch (1973). Operating System Principles. Englewood Cliffs: Prentice Hall. p. 496. ISBN 978-0-13-637843-3.

- Hansen, Per Brinch (2001). "The evolution of operating systems" (PDF). Archived (PDF) from the original on 2011-07-25. Retrieved 2006-10-24. Included in book: Per Brinch Hansen, ed. (2001). "1 The evolution of operating systems". Classic operating systems: from batch processing to distributed systems. New York: Springer-Verlag. pp. 1–36. ISBN 978-0-387-95113-3.

- Hermann Härtig, Michael Hohmuth, Jochen Liedtke, Sebastian Schönberg, Jean Wolter. The performance of μ-kernel-based systems Archived 2020-02-17 at the Wayback Machine. Härtig, Hermann; Hohmuth, Michael; Liedtke, Jochen; Schönberg, Sebastian (1997). "The performance of μ-kernel-based systems". Proceedings of the sixteenth ACM symposium on Operating systems principles - SOSP '97. p. 66. CiteSeerX 10.1.1.56.3314. doi:10.1145/268998.266660. ISBN 978-0897919166. S2CID 1706253. ACM SIGOPS Operating Systems Review, v.31 n.5, p. 66–77, Dec. 1997

- Houdek, M. E., Soltis, F. G., and Hoffman, R. L. 1981. IBM System/38 support for capability-based addressing. In Proceedings of the 8th ACM International Symposium on Computer Architecture. ACM/IEEE, pp. 341–348.

- Intel Corporation (2002). The IA-32 Architecture Software Developer's Manual, Volume 1: Basic Architecture.

- Levin, R.; Cohen, E.; Corwin, W.; Pollack, F.; Wulf, William (1975). "Policy/mechanism separation in Hydra". ACM Symposium on Operating Systems Principles / Proceedings of the 5th ACM Symposium on Operating Systems Principles. 9 (5): 132–140. doi:10.1145/1067629.806531.

- Levy, Henry M. (1984). Capability-based computer systems. Maynard, Mass: Digital Press. ISBN 978-0-932376-22-0. Archived from the original on 2007-07-13. Retrieved 2007-07-18.

- Liedtke, Jochen. On µ-Kernel Construction, Proc. 15th ACM Symposium on Operating System Principles (SOSP), December 1995.

- Linden, Theodore A. (December 1976). "Operating System Structures to Support Security and Reliable Software" (PDF). ACM Computing Surveys. 8 (4): 409–445. doi:10.1145/356678.356682. hdl:2027/mdp.39015086560037. ISSN 0360-0300. S2CID 16720589. Archived (PDF) from the original on 2010-05-28. Retrieved 2010-06-19.

- Lorin, Harold (1981). Operating systems. Boston, Massachusetts: Addison-Wesley. pp. 161–186. ISBN 978-0-201-14464-2.

- Schroeder, Michael D.; Jerome H. Saltzer (March 1972). "A hardware architecture for implementing protection rings". Communications of the ACM. 15 (3): 157–170. CiteSeerX 10.1.1.83.8304. doi:10.1145/361268.361275. ISSN 0001-0782. S2CID 14422402.

- Shaw, Alan C. (1974). The logical design of Operating systems. Prentice-Hall. p. 304. ISBN 978-0-13-540112-5.

- Tanenbaum, Andrew S. (1979). Structured Computer Organization. Englewood Cliffs, New Jersey: Prentice-Hall. ISBN 978-0-13-148521-1.

- Wulf, W.; E. Cohen; W. Corwin; A. Jones; R. Levin; C. Pierson; F. Pollack (June 1974). "HYDRA: the kernel of a multiprocessor operating system" (PDF). Communications of the ACM. 17 (6): 337–345. doi:10.1145/355616.364017. ISSN 0001-0782. S2CID 8011765. Archived from the original (PDF) on 2007-09-26. Retrieved 2007-07-18.

- Baiardi, F.; A. Tomasi; M. Vanneschi (1988). Architettura dei Sistemi di Elaborazione, volume 1 (in Italian). Franco Angeli. ISBN 978-88-204-2746-7. Archived from the original on 2012-06-27. Retrieved 2006-10-10.

- Swift, Michael M.; Brian N. Bershad; Henry M. Levy. Improving the reliability of commodity operating systems (PDF). Archived (PDF) from the original on 2007-07-19. Retrieved 2007-07-16.

- Gettys, James; Karlton, Philip L.; McGregor, Scott (1990). "The X window system, version 11". Software: Practice and Experience. 20: S35–S67. doi:10.1002/spe.4380201404. S2CID 26329062. Retrieved 2010-06-19.

- Michael M. Swift; Brian N. Bershad; Henry M. Levy (February 2005). "Improving the reliability of commodity operating systems". ACM Transactions on Computer Systems (TOCS). Association for Computing Machinery. 23 (1): 77–110. doi:10.1145/1047915.1047919. eISSN 1557-7333. ISSN 0734-2071. S2CID 208013080.

Further reading

- Andrew Tanenbaum, Operating Systems – Design and Implementation (Third edition);

- Andrew Tanenbaum, Modern Operating Systems (Second edition);

- Daniel P. Bovet, Marco Cesati, The Linux Kernel;

- David A. Peterson, Nitin Indurkhya, Patterson, Computer Organization and Design, Morgan Kaufmann (ISBN 1-55860-428-6);

- B.S. Chalk, Computer Organisation and Architecture, Macmillan P. (ISBN 0-333-64551-0).

External links

- Detailed comparison between most popular operating system kernels

Posted by: starneshinticts.blogspot.com